Companies that rethink and reform their outbound distribution networks gain new sources of revenues, greater sustainability, a more resilient supply chain, and significant competitive advantages over slower-moving rivals.

The impact of supply chain disruption on the global chemicals industry has been severe, affecting not only the inbound supplies of feedstocks needed for the chemicals that companies make but also the outbound distribution of those products to customers. And yet many chemical companies still haven’t made the effort to optimize their supply chains, especially their outbound distribution activities, even though they have considerably more control over them than they do over their inbound supply chains. The failure to optimize these outbound activities can pose serious risks for chemical companies, especially as the market for chemicals becomes increasingly commoditized and customers can switch easily to cheaper, more responsive producers.

Given that significant advances in digitization have generated considerably higher levels of efficiency and transparency, the time is ripe for companies to rethink and reform their outbound distribution networks—not just in the chemical industry but in every sector that faces similar challenges. The rewards of making such an effort can be considerable: significant competitive advantages over slower-moving rivals, new sources of revenues through a variety of value-added services, greater sustainability through reduced energy costs, and a more resilient supply chain overall.

The Outbound Opportunity

Outbound supply chains have a direct impact on chemical companies’ costs and service performance—which, in turn, directly affect customer satisfaction. And outbound chains tend to be more complex than inbound chains, given that the same narrow set of feedstocks must support a growing number, and increasingly wide variety, of products. What’s more, the amount and diversity of products, as well as the number of customers and end markets, tend to ramp up with every step in the value chain.

Optimizing outbound distribution chains, therefore, can significantly reduce costs—by as much as 20%, in our experience—while maintaining, or even improving, pre-optimization service levels.

Moreover, optimized, more cost-efficient supply chains are also more sustainable and resilient. Simply by transporting less material over shorter distances, and using the most energy-efficient transport modes, companies can reduce their carbon emissions per ton-kilometer shipped by up to 20%, even before any direct focus on emissions reductions. And by building more sophisticated, transparent, manageable, and agile supply chains, companies can protect themselves from the kinds of unforeseen disruptions that have plagued the industry recently.
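The emissions arithmetic behind this claim can be sketched in a few lines of Python. This is a minimal illustration that assumes emissions are estimated as tonnes × kilometers × a mode-specific factor. The factor values and shipment data are hypothetical placeholders, not published figures. The sketch separates the two effects the paragraph describes: shorter distances cut total emissions, while a shift to a less carbon-intensive mode lowers emissions per ton-kilometer.

```python
# Hypothetical emission factors in kg CO2e per tonne-kilometer.
# Placeholder values for illustration only; real factors vary by region, vehicle, and load.
EMISSION_FACTORS = {"truck": 0.10, "rail": 0.03, "barge": 0.04}


def shipment_emissions(tonnes: float, km: float, mode: str) -> float:
    """kg CO2e for one shipment: tonnes x km x mode-specific factor."""
    return tonnes * km * EMISSION_FACTORS[mode]


def summarize(shipments: list[tuple[float, float, str]]) -> tuple[float, float]:
    """Return (total kg CO2e, kg CO2e per tonne-km) for (tonnes, km, mode) shipments."""
    total_kg = sum(shipment_emissions(t, km, m) for t, km, m in shipments)
    total_tkm = sum(t * km for t, km, _ in shipments)
    return total_kg, total_kg / total_tkm


# Baseline: two 20 t truck shipments over 500 km each.
baseline = [(20, 500, "truck"), (20, 500, "truck")]
# Optimized: shorter 400 km routes, with one of the two shipments moved to rail.
optimized = [(20, 400, "truck"), (20, 400, "rail")]

for label, plan in (("baseline", baseline), ("optimized", optimized)):
    kg, intensity = summarize(plan)
    print(f"{label}: {kg:.0f} kg CO2e, {intensity:.3f} kg CO2e/tkm")
```

With these made-up numbers, total emissions fall from 2,000 to 1,040 kg CO2e, and intensity falls from 0.100 to 0.065 kg CO2e per tonne-km.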

Intelligent Distribution

Yet too many chemical companies have neglected their outbound distribution chains, despite the complexity of those chains and their direct impact on costs, customer satisfaction, and carbon footprints. Driven in part by strong M&A activity, customer distribution networks have grown substantially but with little overall strategic direction. Company leaders have downplayed the relevance of those networks to the business, and they have been reluctant to invest in assets that they consider less strategic than those involved in production.

This is a mistake. In the past decade or so, new technologies have transformed supply chains. Thanks in part to the rise of the Internet of Things and big data analytics, supply chains now produce millions of vital data points. Material flows can be visualized, analyzed, optimized, and thus steered in a completely new fashion, right down to the single product and shipment level—even for global companies with hundreds of thousands of shipments every year.

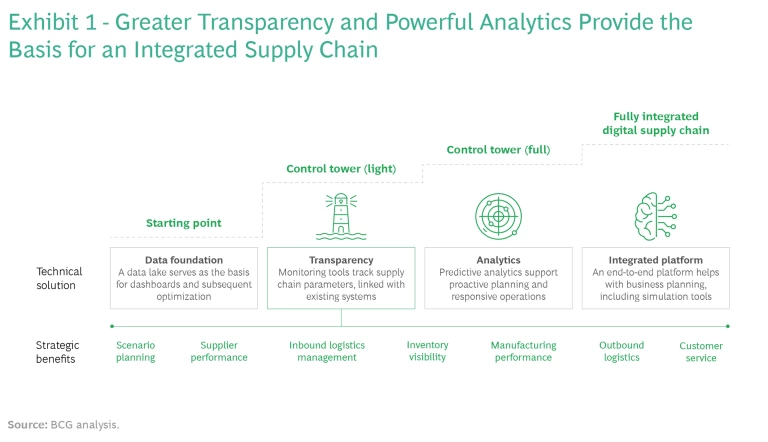

More recently, leading companies have begun to use artificial intelligence (AI) to optimize supply chains. Digital twins of entire supply chains can be modeled on the basis of millions of single transactions and used to simulate multiple supply scenarios, while taking into account business constraints such as labor laws, shipment costs per mode of transport, warehouse fixed and handling costs, and material flows. Sophisticated machine-learning algorithms can forecast supply, demand, and shipment delays. Optimization algorithms based on heuristics or mixed-integer programming can help determine the best future setup of the supply chain and build the foundation for digital transformation. Even simple analyses frequently reveal quick wins. Built in stages, these capabilities add up to a fully integrated distribution chain that provides end-to-end transparency, flexibility, and predictability. (See Exhibit 1.)

A digital model, however, will always remain a simplified representation of reality. So, it is important to focus not just on the technology and algorithms required but also on the people and work processes involved in making change happen in the real world. In our experience, companies that achieve successful AI transformations dedicate as much as 70% of the required investment to embedding AI in the organization, actively managing change, and building trust in the new technologies being implemented.

Companies that take advantage of these new levels of transparency and control can do more than improve their cost positions. They can also boost service levels, such as lead times and on-time-in-full performance; provide customers with real-time track-and-trace information; and offer the option to request smaller order quantities.

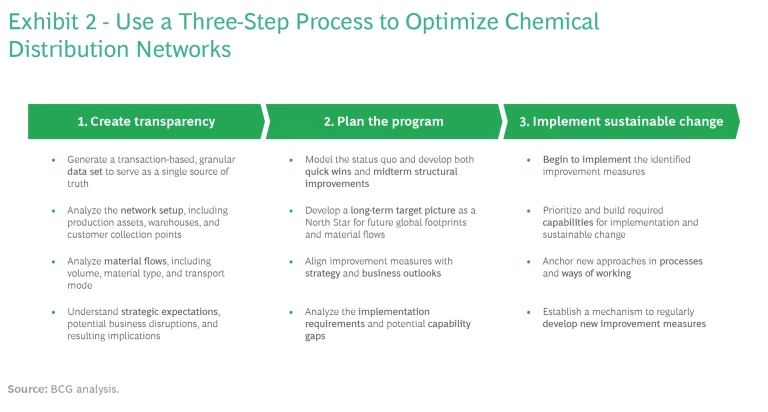

We advise companies looking to capture the benefits of a fully optimized outbound distribution chain to follow a three-step process, as shown in Exhibit 2:

- Create transparency. Integrate data from the various sources needed, build a data model, establish the current baseline, and analyze the changes required to capture the intended benefits.

- Plan the program. Determine the proper path for making the improvements needed, and make sure that it is linked to both the short-term and midterm optimization goals as well as the overall longer-term strategy.

- Implement sustainable change. Begin making the planned changes and monitor the impact; ensure that new approaches are embedded securely in processes and ways of working while remaining open to new methods and approaches.

Create Transparency

The first step in any distribution network optimization program is to ensure full transparency into the network’s current physical locations—production assets, warehouses, and customer collection points—and the material flows to and from these locations. This effort has three main objectives:

- Generate a consolidated, global data set encompassing all relevant costs, constraints, sites, and material flows, and apply AI to help reconcile data among disparate sources. The resulting data model must replicate reality as closely as possible. If the model contains only a subset of flows, such as the final delivery leg from a specific location to a specific customer, the analysis might focus on switching the source from one warehouse to another, which may improve performance and lower cost on that particular leg. But previous legs, from the production plant to that warehouse, could become less efficient, offsetting or even reversing the gain.

- Analyze the current network—including production assets, warehouses, and customer collection points—on a consolidated map in order to understand the full complexity of current operations and generate insights into opportunities for optimization. While the optimization model can mathematically determine the best warehouse locations, for example, noting the location of warehouses on a map could reveal redundancies. Customer density information will indicate where the warehouse footprint is too dense or too spotty. And product flow data will show which routes are the main ones and where products may not be distributed to customers most efficiently.

- Understand the effect of all optimization levers in light of overall strategy and potential business disruptions, and then quantify and rank their impact. Because the personnel and financial resources needed to implement any planned changes are limited, it will be necessary to prioritize the optimization levers to maximize the return on investment and properly sequence their implementation. This is feasible only when the costs and benefits of each lever are clearly quantified.
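The pitfall described in the first bullet can be made concrete with a toy comparison. The sketch below assumes one plant, two candidate warehouses (W1 and W2), and one customer, with hypothetical per-tonne costs for each leg. Judged on the final leg alone, W2 looks cheaper. Judged end to end, W1 is the better source.

```python
# Hypothetical per-tonne transport costs (e.g., EUR/t) for each leg of the network.
PLANT_TO_WAREHOUSE = {"W1": 5.0, "W2": 12.0}
WAREHOUSE_TO_CUSTOMER = {"W1": 10.0, "W2": 8.0}


def best_source_last_leg(warehouses: list[str]) -> str:
    """Pick the warehouse with the cheapest final delivery leg only (partial view)."""
    return min(warehouses, key=lambda w: WAREHOUSE_TO_CUSTOMER[w])


def best_source_end_to_end(warehouses: list[str]) -> str:
    """Pick the warehouse with the cheapest plant-to-warehouse-to-customer path (full view)."""
    return min(warehouses, key=lambda w: PLANT_TO_WAREHOUSE[w] + WAREHOUSE_TO_CUSTOMER[w])


options = ["W1", "W2"]
print("last-leg view picks:", best_source_last_leg(options))      # W2 (8 < 10)
print("end-to-end view picks:", best_source_end_to_end(options))  # W1 (15 < 20)
```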

The target state for end-to-end supply chain transparency is usually quite clear, far clearer than the path to achieving it. Typically, a full and comprehensive data set will not be available, but it is also not a necessary prerequisite. This is where recent advances in data engineering and analytics can help, by making it possible to combine very large and varied data sources into a consistent supply chain data lake. Common enterprise resource planning systems, for example, can provide shipment data to highlight material flows on a per-shipment level. Business data warehouses and other databases (containing order data, for example) can provide a comprehensive view of customer locations and demand. And asset databases can provide details on transport equipment, warehouses, tank terminals, and other physical assets in the supply chain.

All this data needs to be integrated and augmented, for example by geocoding company-owned, third-party, and customer locations and, when necessary, by approximating all internal flows of product volumes to generate a full end-to-end view. Careful validation is a must, because data from many different sources might be contradictory, incomplete, or falsely matched. AI-supported techniques such as fuzzy matching can help to improve the matching of various data sets.
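As an illustration of the matching step, the sketch below uses fuzzy string matching from Python's standard-library `difflib` module to pair customer names from two hypothetical sources, such as an ERP export and a CRM export. It is a simple stand-in for AI-supported matching, not a description of any particular tool. The list of legal-form suffixes and the 0.85 similarity cutoff are assumptions to be tuned. Any match should still go through careful validation.

```python
import difflib
import re

# Hypothetical list of legal-form tokens to ignore when comparing company names.
LEGAL_FORMS = {"gmbh", "ag", "inc", "ltd", "llc", "co", "sa"}


def normalize(name: str) -> str:
    """Lowercase, replace punctuation with spaces, and drop legal-form tokens."""
    cleaned = re.sub(r"[^\w\s]", " ", name.lower())
    return " ".join(t for t in cleaned.split() if t not in LEGAL_FORMS)


def match_customers(erp_names: list[str], crm_names: list[str], cutoff: float = 0.85) -> dict:
    """Map each ERP name to its closest CRM name, or None if nothing clears the cutoff."""
    by_key = {normalize(n): n for n in crm_names}  # normalized key -> original CRM name
    matches = {}
    for name in erp_names:
        best = difflib.get_close_matches(normalize(name), list(by_key), n=1, cutoff=cutoff)
        matches[name] = by_key[best[0]] if best else None
    return matches


erp = ["ACME Chemicals GmbH", "Delta Solvents Ltd."]
crm = ["Acme Chemicals", "Nordic Polymers AG"]
print(match_customers(erp, crm))
# {'ACME Chemicals GmbH': 'Acme Chemicals', 'Delta Solvents Ltd.': None}
```

Normalizing before comparing keeps the similarity score focused on the distinctive part of each name. Unmatched records (`None`) are natural candidates for manual review rather than silent exclusion.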

Once completed, the resulting data model forms the foundation for any further work. Creating it, however, must not be a one-off effort but rather a continuous process, with more and more data added over time. Ideally, automated pipelines to existing sources of data should be established and additional external data sources regularly identified and connected.

A final objective in creating full transparency involves analyzing the data model in light of the company’s overall corporate goals. This will help to generate a complete view of the strategic ambition for the distribution network and thus identify implications for its future setup and what’s needed to achieve it. Balancing multiple factors, such as achieving cost leadership versus increasing customer service levels and preserving product quality, may well be required. To that end, the data set should reveal the relationship between such factors, with the understanding, for example, that cost goals are unlikely to be achieved if the numbers of warehouses and inventory levels are increased.

Plan the Program

Once the company has gained full visibility into the current state of its distribution network and settled on its strategic goals, it’s time to begin planning for the network’s future shape. This process should follow a structured approach encompassing three specific time frames: quick wins, midterm improvements, and long-term targets.

Quick Wins. Plan to capture short-term improvements, within the existing supply chain, that will generate value with little or no investment. Quick wins are not intended to tackle major structural changes but rather to provide early evidence of the program’s potential for success, help fund the journey, generate support for the overall plan, build momentum among the program team, and boost confidence among business stakeholders.

Quick wins largely depend on the company’s specific setup, including its particular production footprint, warehouse locations, modes of transport, and product characteristics (such as hazardous versus nonhazardous chemicals). A typical quick win involves reductions in obsolete and slow-moving goods. Often, active inventory management is minimal, especially for low-volume products. Gaining transparency into stock and shipments at the product and warehouse levels will allow companies, for example, to segment products by volume share and order frequency and to develop location- and product-specific inventory targets that can account for the precise inventory needs of low-volume products.
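The segmentation step can be sketched as follows. This minimal example assumes a classic ABC split by cumulative volume share (80% and 95% cutoffs) combined with a simple order-frequency flag, where 12 or more orders a year counts as frequent. The cutoffs, the days-of-cover targets, and the product data are all hypothetical. In practice they would be set per location and product family.

```python
from dataclasses import dataclass


@dataclass
class Product:
    sku: str
    annual_volume_t: float  # tonnes shipped per year
    orders_per_year: int    # number of customer orders per year


# Hypothetical days-of-cover targets per segment; C-sporadic items are treated as make-to-order.
DAYS_OF_COVER = {
    "A-frequent": 10, "A-sporadic": 20,
    "B-frequent": 15, "B-sporadic": 25,
    "C-frequent": 20, "C-sporadic": 0,
}


def segment(products: list[Product], a_cut: float = 0.80, b_cut: float = 0.95,
            min_orders: int = 12) -> dict[str, str]:
    """Assign each SKU an ABC class (by cumulative volume share) plus a frequency flag."""
    total = sum(p.annual_volume_t for p in products)
    share_before = 0.0  # cumulative volume share of the higher-ranked SKUs
    segments = {}
    for p in sorted(products, key=lambda x: x.annual_volume_t, reverse=True):
        abc = "A" if share_before < a_cut else "B" if share_before < b_cut else "C"
        freq = "frequent" if p.orders_per_year >= min_orders else "sporadic"
        segments[p.sku] = f"{abc}-{freq}"
        share_before += p.annual_volume_t / total
    return segments


def inventory_target_t(p: Product, seg: str) -> float:
    """Target stock in tonnes: average daily volume x segment days of cover."""
    return p.annual_volume_t / 365 * DAYS_OF_COVER[seg]


products = [
    Product("P1", 700, 52), Product("P2", 200, 24),
    Product("P3", 80, 6), Product("P4", 20, 2),
]
segs = segment(products)
for p in products:
    print(p.sku, segs[p.sku], round(inventory_target_t(p, segs[p.sku]), 1))
```

Here P4 lands in the C-sporadic segment with a zero stock target. That mirrors the idea of setting explicit, product-specific targets for low-volume items instead of letting them accumulate unmanaged.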

Such shipment transparency is especially important for global chemical companies, given the current disruptions in global freight. Detailed shipment information is also critical to identify and improve so-called triangle shipments—from production to storage location A to storage location B to customer, for example. Geocoding, visualizing, and modeling each leg of such triangle shipments allow companies to quickly detect inefficiencies, identify unnecessary shipments through root-cause analysis, and determine more direct and cost-efficient routes, with the added benefit of reducing emissions.
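One simple way to surface candidate triangle shipments is to compare the routed distance of each geocoded shipment chain with the direct distance from origin to final destination. The sketch below assumes each chain is a list of (latitude, longitude) stops and uses great-circle (haversine) distance as a proxy for road or rail distance. The 1.3 detour threshold and the coordinates are hypothetical. Flagged chains are candidates for root-cause analysis, not automatic errors.

```python
import math

EARTH_RADIUS_KM = 6371.0


def haversine_km(a: tuple[float, float], b: tuple[float, float]) -> float:
    """Great-circle distance in km between two (lat, lon) points given in degrees."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def detour_ratio(stops: list[tuple[float, float]]) -> float:
    """Routed distance through all stops divided by the direct origin-to-destination distance."""
    routed = sum(haversine_km(p, q) for p, q in zip(stops, stops[1:]))
    direct = haversine_km(stops[0], stops[-1])
    return routed / direct if direct > 0 else math.inf


def flag_triangle_shipments(chains: dict[str, list[tuple[float, float]]],
                            max_ratio: float = 1.3) -> list[str]:
    """Return IDs of multi-leg chains whose detour ratio exceeds max_ratio."""
    return [sid for sid, stops in chains.items()
            if len(stops) > 2 and detour_ratio(stops) > max_ratio]


# Hypothetical geocoded chains: plant -> (storage locations) -> customer.
chains = {
    "S1": [(51.0, 7.0), (53.5, 10.0), (48.1, 11.6), (48.8, 9.2)],  # doubles back
    "S2": [(51.0, 7.0), (50.0, 8.0), (48.8, 9.2)],                  # stop roughly en route
    "S3": [(51.0, 7.0), (48.8, 9.2)],                                # direct delivery
}
print(flag_triangle_shipments(chains))  # ['S1']
```

A ratio near 1.0 (as for S2) means an intermediate stop lies roughly on the way. A ratio far above 1.0 (as for S1) points to material traveling away from the customer before coming back.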

Midterm Improvements. The next stage in the planning process involves midterm improvements, which should encompass the time frame of companies’ normal planning cycles, typically from three to five years. Because these improvements will require changes to the actual structure of the distribution chain, they should include the evaluation of the capital expenditures needed to fund the changes and the building of positive business cases. Thinking systematically about the entire distribution chain opens up the range of possible improvements considerably.

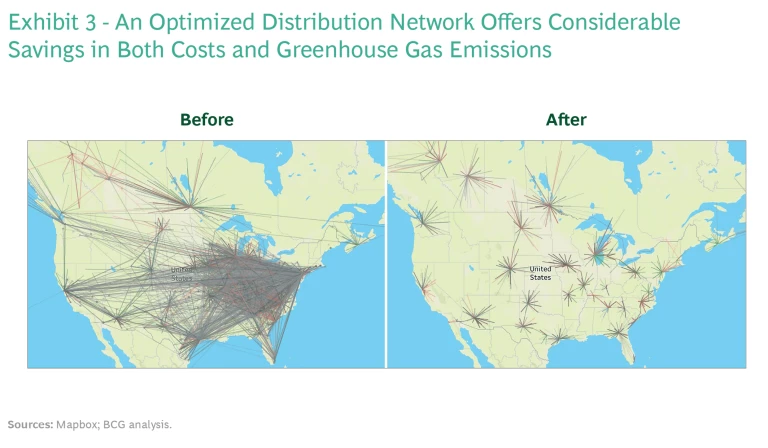

Chemical companies that have grown inorganically in recent years, for example, are no doubt aware that consolidation of warehouses would allow for significant savings. Instead of merely closing the warehouses with the highest storage costs, however, these companies should take a more sophisticated optimization approach. Assuming that their efforts to gain transparency into supply chain activities have been successful, they should have full visibility into the cost and the greenhouse gases (GHG) emitted during the last leg between warehouses and customers. This, in turn, should allow them to balance the economies of scale gained via warehouse consolidation with reduced proximity to customers, while taking into account considerations such as tariffs, geographical concentration versus diversification, and any associated changes in the supply chain’s continuity risk profile.
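The trade-off between warehouse fixed costs and last-leg costs is the classic facility location problem. At real scale, it is typically formulated as a mixed-integer program. For a handful of sites, however, it can simply be enumerated, which makes the logic easy to see. The sketch below assumes three candidate warehouses, three customer clusters, and hypothetical annual costs. It ignores capacity limits, tariffs, and continuity risk, which a production model would add as constraints or penalty terms.

```python
from itertools import combinations

# Hypothetical annual fixed cost of keeping each warehouse open (kEUR).
FIXED_COST = {"W1": 100.0, "W2": 80.0, "W3": 120.0}
# Hypothetical annual last-leg cost (kEUR) of serving each customer cluster from each warehouse.
DELIVERY_COST = {
    "C1": {"W1": 10.0, "W2": 40.0, "W3": 160.0},
    "C2": {"W1": 30.0, "W2": 15.0, "W3": 150.0},
    "C3": {"W1": 170.0, "W2": 145.0, "W3": 12.0},
}


def network_cost(open_sites: frozenset[str]) -> float:
    """Fixed cost of open sites plus cost of serving each cluster from its cheapest open site."""
    fixed = sum(FIXED_COST[w] for w in open_sites)
    delivery = sum(min(costs[w] for w in open_sites) for costs in DELIVERY_COST.values())
    return fixed + delivery


def best_footprint() -> frozenset[str]:
    """Enumerate every non-empty subset of sites and return the cheapest one."""
    sites = list(FIXED_COST)
    subsets = (frozenset(c) for k in range(1, len(sites) + 1)
               for c in combinations(sites, k))
    return min(subsets, key=network_cost)


best = best_footprint()
print(sorted(best), network_cost(best))  # ['W2', 'W3'] 267.0
```

In this toy case, closing W1 raises the last-leg cost for cluster C1 from 10 to 40, but the 100 saved in fixed cost more than pays for it. Because the number of subsets grows roughly as 2^n, enumeration stops being practical beyond a few dozen sites. That is where mixed-integer programming solvers or heuristics take over.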

Using a demand forecast model based on machine learning and a center-of-gravity analysis to reveal the geographic origins of demand, companies can determine the optimal number and location of multiple warehouses within each region or demand cluster. Running multiple mathematical optimizations on a granular level will allow them to incorporate specific industry and product characteristics into the model, such as different requirements for commodity and specialty products or customer-specific needs.
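The center-of-gravity idea extends naturally to several warehouses. The method alternately assigns each demand point to its nearest center and then moves each center to the demand-weighted centroid of its assigned points, which amounts to weighted k-means clustering. The sketch below is a minimal version built on several assumptions. Demand is given as hypothetical (latitude, longitude, tonnes) points. Distances are treated as planar on degrees, a rough approximation that is tolerable only within a single region. The demand forecast that would supply the weights is taken as given. The output is a starting point for finer-grained optimization runs, not a final site choice.

```python
Point = tuple[float, float, float]  # (lat, lon, demand in tonnes)


def center_of_gravity(points: list[Point]) -> tuple[float, float]:
    """Demand-weighted mean latitude and longitude."""
    total = sum(w for _, _, w in points)
    lat = sum(la * w for la, _, w in points) / total
    lon = sum(lo * w for _, lo, w in points) / total
    return lat, lon


def _sq_dist(p: Point, c: tuple[float, float]) -> float:
    # Planar squared distance on degrees: a rough proxy, acceptable only regionally.
    return (p[0] - c[0]) ** 2 + (p[1] - c[1]) ** 2


def multi_center_of_gravity(points: list[Point], k: int, max_iter: int = 100):
    """Weighted k-means: returns k (lat, lon) centers for k warehouses."""
    # Deterministic start: heaviest point, then repeatedly the point farthest from chosen centers.
    centers = [max(points, key=lambda p: p[2])[:2]]
    while len(centers) < k:
        far = max(points, key=lambda p: min(_sq_dist(p, c) for c in centers))
        centers.append(far[:2])
    for _ in range(max_iter):
        clusters: list[list[Point]] = [[] for _ in centers]
        for p in points:
            nearest = min(range(len(centers)), key=lambda i: _sq_dist(p, centers[i]))
            clusters[nearest].append(p)
        new_centers = [center_of_gravity(cl) if cl else centers[i]
                       for i, cl in enumerate(clusters)]
        if new_centers == centers:
            break  # assignments have stabilized
        centers = new_centers
    return centers


demand = [(48.0, 11.0, 5), (48.2, 11.2, 5), (53.0, 10.0, 4), (53.2, 10.2, 4)]
print(center_of_gravity(demand))
print(multi_center_of_gravity(demand, k=2))
```

Running the function for several values of `k` and costing each result gives one way to compare candidate warehouse counts per region.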

To ensure that the results of the model are implemented with the least disruption to the business, companies will need to work out a detailed implementation plan, including the sequence of warehouse closures and shifts in volume shipped. (See Exhibit 3.)

While the cost benefits of such improvements will show up over time, the benefits in terms of reduced GHG emissions can be much harder to track. Companies should consider creating a control tower that monitors shipments and other supply chain activities at the single-transaction level, capturing data on their emissions and continuously tracking emissions reductions compared with a preestablished baseline.
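At its core, the emissions side of such a control tower is an aggregation over single transactions. The sketch below is a minimal illustration that assumes hypothetical per-mode emission factors and a baseline expressed as an intensity (kg CO2e per tonne-kilometer) measured before the program started. Comparing against an intensity rather than an absolute total is one possible design choice, which keeps volume growth from masking efficiency gains. The `Shipment` record and all figures are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical emission factors, kg CO2e per tonne-km (placeholders, not published values).
EMISSION_FACTORS = {"truck": 0.10, "rail": 0.03}


@dataclass
class Shipment:
    period: str  # reporting period, e.g. "2024-01"
    tonnes: float
    km: float
    mode: str


def avoided_emissions(shipments: list[Shipment], baseline_kg_per_tkm: float) -> dict[str, float]:
    """Per period: kg CO2e the same tonne-km would have emitted at baseline intensity,
    minus the kg CO2e actually estimated from the individual shipments."""
    actual: defaultdict[str, float] = defaultdict(float)
    tkm: defaultdict[str, float] = defaultdict(float)
    for s in shipments:
        actual[s.period] += s.tonnes * s.km * EMISSION_FACTORS[s.mode]
        tkm[s.period] += s.tonnes * s.km
    return {p: baseline_kg_per_tkm * tkm[p] - actual[p] for p in sorted(actual)}


log = [
    Shipment("2024-01", 10, 100, "truck"),
    Shipment("2024-01", 10, 100, "rail"),
    Shipment("2024-02", 20, 100, "rail"),
]
print(avoided_emissions(log, baseline_kg_per_tkm=0.10))
```

With these made-up inputs, the report shows about 70 kg CO2e avoided in January and about 140 kg in February, because more of the same tonne-kilometers moved by rail.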

Building a flexible optimization model that fully reflects the distribution network will also allow companies to adapt to evolving conditions, such as new-product introductions and significant changes in demand patterns, and to make the necessary adjustments to the network quickly and effectively.

Long-Term Targets. In addition to capturing quick wins and making plans for midterm structural improvements, companies should look further into the future, reimagining what their distribution networks might look like given the emergence of broad trends, such as the impact of electric vehicles on customer requirements. The idea is to define a North Star view of the future network in light of the conditions that could influence it over time. This exercise need not take immediate business constraints into account, but it must make sure that short-term and midterm supply chain initiatives do not conflict with the goals of the North Star vision.

It is conceivable that each region, division, or end market could develop its own independent North Star. Global alignment would be critical in that case. The target picture would need to cover all aspects of outbound logistics, including warehouse footprint, material flows and transportation modes, inventory management, and supply chain governance. Adjacent areas, notably production, as well as customer-facing service offerings should also be considered.

North Star exercises are usually less quantitative and data driven than efforts to develop specific improvement measures. Instead, they take larger structural changes into consideration, including:

- Potential regulatory constraints, such as trade tariffs and prohibited materials

- Expected market changes and fluctuating customer requirements, including increased localization of supply chains

- Changes in strategic direction that might affect the future product portfolio

Long-term targets should serve as unified goals across functions, business units, and responsibilities. Conducting a gap analysis between the current setup and the long-term North Star target will allow senior management to identify major improvement areas and prioritize the changes needed. Finally, a North Star analysis will help management track the progress of improvement initiatives toward long-term ambitions more broadly.

Implement Sustainable Change

Once the improvement plan has been designed, companies must start carrying out and monitoring the intended changes. At the same time, however, they must also build the capabilities needed to implement the changes smoothly and to allow for ongoing, sustainable improvement.

They should begin by assigning accountability for each improvement task, determining who should carry it out along predefined action steps and measure its impact through specific milestones and implementation KPIs. Tracking progress against specific individual milestones makes success easy to measure. But multiple milestones will likely need to be achieved before any impact on specific KPIs becomes visible.

One milestone, for example, might involve the initiation of negotiations to rent a terminal at a harbor not previously used. But the effect of reducing both delivery times and logistics costs from the new terminal to customers can only be measured after multiple additional milestones have been passed and the new setup is fully implemented and running smoothly.

Tracking milestones and their financial impact, as well as reporting regularly on progress, is best done through a central project management office (PMO). The PMO should both manage and control the realization of benefits within the planned time frame and resource budget and support implementation teams when they run into challenges.

A key part of the PMO’s role will be to enlist support in tackling common challenges, including from:

- The business, for example to propose alternative shipping modes

- Subject matter experts, for example to ensure methodological consistency in the approach to data and its analysis

- Management, for example to properly prioritize implementation activities so as not to begin too many measures at once

Specific implementation measures should be designed and driven by the owners of the business or function where the necessary changes will occur. Only they have the knowledge and experience required to carry them out successfully, and they are the ones who must integrate the changes into their daily work routines.

As with all critical operations, making major changes to the distribution network will require developing—or buying—new capabilities, including people-related capabilities, such as new knowledge and skills; supporting systems, such as optimization tools and supplemental data; and assets and hardware, such as real-time transport position monitoring devices and control rooms. Required capabilities will likely include both temporary implementation capabilities and permanent execution capabilities. Ideally, the specific capabilities needed during implementation (such as the ability to create and run a structural optimization model) will serve as the foundation for an execution capability (such as the ability to optimize product flows on an ongoing basis).

To sustain the changes made, it is critical to anchor them in daily ways of working. New methods that enable companies to run the business in a more efficient and effective manner need to replace old ways and traditional thinking at all levels. This includes both tangible work processes (such as regularly running quantitative optimization models and frequently benchmarking processes against competitors) as well as intangible, cultural changes (such as cross-functional collaboration and the assignment of responsibility for optimization efforts across functions and hierarchical levels).

The key to long-term success is continuous improvement. And the key to continuous improvement is a company-wide change in mindset—the willingness not just to own specific improvement measures and results but also to seek out new ideas and methods and to incorporate the best ones into regular ways of working.

With the right investments and approach, PMOs set up during the project often evolve into centers of excellence that can continue to support this cultural change by ensuring that continuous improvement is top of mind always and everywhere. As such, they can serve as the basis for each round of structural improvements, both after the initial wave of measures has been completed and when new competitive pressures, market opportunities, or advances in technology warrant a new round of structural optimization efforts.

Companies that optimize outbound product distribution networks, an all-too-often neglected aspect of the chemicals business, achieve real gains in cost, sustainability, and resiliency. The advent of the Internet of Things, big data, AI, and other digital technologies can help in this effort, significantly reducing complexity, accelerating value realization, and improving customer satisfaction.

The key is transparency. Using the digital tools now available, companies must lay the foundation for optimization through a thorough understanding of the network and how it operates. This, in turn, will give them the insights needed to plan for and carry out the necessary optimization efforts, both in the near term and in the future. Those that succeed will be able to serve their customers more effectively, at lower cost, and with a sustainability advantage over their slower rivals.

In short, it’s time to see outbound supply chains as a real competitive advantage and to make the moves needed to gain that advantage.