Retail banks are data businesses. Their value chains have always been supported by data, and a large part of their competitive advantage is based on better use of the information that data provides and the insights it yields. Banks, along with retailers and telecommunications companies, have long had more consumer data available to them than other businesses.

Consumers embraced digital channels for all manner of commerce well before many businesses, and banks were among the first companies to take advantage of new streams of data. A few were early movers, employing advanced data analytics, establishing dedicated teams, appointing chief data officers, and investing substantial time, effort, and resources in building out infrastructure and enabling data analysis.

All that said, The Boston Consulting Group’s work with leading retail banks around the world shows that despite the early start and formidable resources, most banks are far from realizing big data’s full potential.

Data and analytics today bring the ability to combine three elements:

- Vastly bigger volumes of data, including highly detailed data combined from different systems

- Much more insightful models, powered by so-called machine-learning software, which can make data-driven predictions and decisions

- More efficient technology, such as Hadoop software-hardware clusters, which are among the most cost-effective ways to handle massive amounts of both structured and far more complex unstructured data

Beyond the basic roadblocks that hold up companies in every industry, such as resistance to change and lack of qualified resources, banks have their own reasons for not having made more progress with big data. These include competing priorities, such as addressing regulatory changes in the wake of the financial crisis; IT complexity (because of multilayered systems and siloed data, banks rarely use the full breadth and depth of data at their disposal); and a lack of overall vision combined with widely dispersed, loosely coordinated efforts, which results in suboptimal allocation of human and technical resources and limited interaction and exchange of ideas. In addition, because banks often work with aggregated data served up by their systems, they do not always appreciate the potential embedded in the precision and detail of the data they possess.

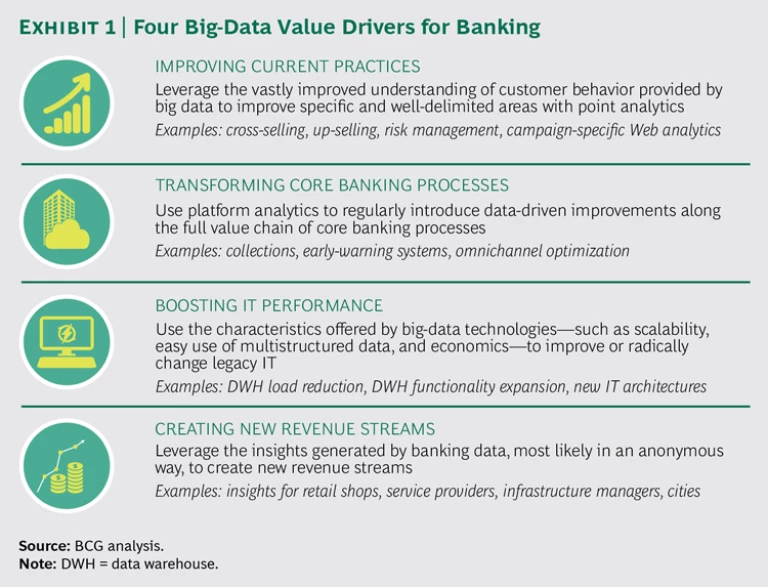

There are at least four areas in which focused and coordinated big-data programs can lead to substantial value for banks in the form of increased revenues and bigger profits. (See Exhibit 1.)

Improving Current Practices with Point Analytics

One of the simplest, and most powerful, applications of data analytics is the development of point solutions that address individual needs and issues one at a time, without disturbing other areas of the business. Big data can be used to improve the assessment of customer risk in a particular context, for instance. Data analytics can also be employed to measure marketing potential more effectively.

One large European bank, for example, used a combination of point solutions to upgrade its credit underwriting and pricing and to enhance the effectiveness of cross-selling and up-selling campaigns. The bank had been running campaigns to increase the share of high-end (gold and platinum) credit cards in its portfolio. It had been using both risk assessment and marketing analytics based on aggregated data to preapprove current standard-card customers and target potential new clients. Its transformation rate was an unimpressive 3% to 5%.

We helped develop a series of advanced-analytics models that can process far more detailed customer information—including data collected at the transaction level and compiled from multiple sources—related to credit risk, behavior, card use, and purchase patterns for other products and services. Using this data and the new models, the bank generated an entirely new series of risk and targeting scores. After a few adjustments were made on the basis of test campaign results, the new scores were applied to the bank’s full portfolio of card-marketing programs. Uptake surged fivefold to an average of more than 20%, and the bank generated tens of millions of euros in new revenues—without incurring the excessive costs often associated with new-client acquisition in saturated European banking markets.
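The article does not describe how the bank's new risk and targeting scores were combined. As a minimal sketch, assuming each customer carries a hypothetical `default_probability` from a credit-risk model and a hypothetical `uptake_propensity` from a targeting model, one simple way to use the two scores together is to preapprove customers under a risk cutoff and then target the most likely responders first:

```python
from dataclasses import dataclass


@dataclass
class Customer:
    customer_id: str
    default_probability: float  # hypothetical output of a credit-risk model
    uptake_propensity: float    # hypothetical output of a targeting model


def select_campaign_targets(customers, max_default_probability, campaign_size):
    """Preapprove customers below a risk cutoff, then keep the likeliest responders."""
    eligible = [c for c in customers if c.default_probability <= max_default_probability]
    ranked = sorted(eligible, key=lambda c: c.uptake_propensity, reverse=True)
    return ranked[:campaign_size]


def expected_uptake_rate(targets):
    """Average predicted uptake across the selected customers (0.0 if none)."""
    if not targets:
        return 0.0
    return sum(c.uptake_propensity for c in targets) / len(targets)


# Illustrative, made-up scores; not data from the bank described above.
customers = [
    Customer("a", 0.01, 0.30),
    Customer("b", 0.08, 0.50),  # high propensity, but fails the risk cutoff
    Customer("c", 0.02, 0.10),
    Customer("d", 0.03, 0.25),
]
targets = select_campaign_targets(customers, max_default_probability=0.05, campaign_size=2)
print([c.customer_id for c in targets], expected_uptake_rate(targets))
```

Applying the risk cutoff before ranking keeps a high-propensity but high-risk customer (here "b") out of the campaign, which mirrors the article's pairing of underwriting and cross-selling. The cutoff and campaign size are tuning parameters that test campaigns, like the ones the bank ran, would help calibrate.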

Transforming Core Processes with Platform Analytics

Retail banks use data-driven reasoning in many of their core processes, such as new-product development, customer relationship management (CRM), and product pricing. But most banks use data that they are already capturing, such as structured data from accounting and reporting systems or other conventional internal sources, and they apply analytics to only a limited number of points in their core processes. More advanced banks have built a platform analytics capability that collects and analyzes not only internal multistructured data but also data from external sources. The internal data takes various forms and is often sourced from new digital channels and media. It can include, for example, customer interaction logs from the bank’s website, voice logs from call centers, and smartphone interaction logs. Additional data is collected from other sources, such as external databases, geolocation analyses, public websites, and social media. These banks develop insights and apply them at every information-exchange point of their core processes.

Platform analytics helped a large U.S. bank substantially improve the performance of its end-to-end collections process. Following the financial crisis, the bank faced two new challenges:

- Unprecedented volumes of customers who had never before been delinquent but now faced financial problems

- An increasing number of financially stretched customers who were juggling multiple credit-card accounts and credit lines—and deciding which cards or lines to let slip into default and which to keep in good standing with regular payments

The bank’s challenge was to identify at-risk accounts as early as possible, assess the borrowers’ capacity to pay, evaluate their willingness to pay (a totally new behavioral characteristic), and match borrowers with restructuring and rehabilitation programs that suited each borrower’s specific circumstances.

Data analytics improved every step of the collections process, from early identification of delinquency through treatment selection and foreclosure, and even external-recovery channel management. By combining structured and unstructured data from internal and external sources, including a number of previously untapped sources, into new behavioral models, the bank developed programs tailored to customers’ financial situations and predispositions. Big-data technologies also supplied accurate, current contact information for customers whose records were outdated, allowing the bank to increase effective outreach by more than 30%. The bank also developed a new valuation approach for files in distress, which allowed it to reprice its portfolios of nonperforming loans more accurately for sale to external collectors.

Perhaps most significant, big data helped the bank better understand both the quality of the credit files coming into the collections process and the performance drivers of its collectors, as well as the interplay between the two. This yielded some surprising results. Established practices were built on the assumption that to maximize total collections, the most difficult files should be allocated to the best collectors. But an advanced analysis of the criteria used to classify files as difficult or easy showed that allocating the easy files to good collectors actually maximized the number of files processed and, eventually, yielded a higher volume of collections.
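The bank's actual allocation model is not described. As a hedged sketch, the underlying question can be framed as an assignment problem: given a hypothetical estimate of what each collector recovers from each pool of files over a fixed period, choose the one-to-one assignment that maximizes the total rather than assuming that the best collectors belong on the hardest files. The numbers below are invented for illustration only.

```python
from itertools import permutations


def best_allocation(collectors, pools, expected_collections):
    """Exhaustively search one-to-one collector-to-pool assignments.

    expected_collections[(collector, pool)] is a hypothetical estimate of the
    amount a collector recovers from a pool over a fixed period, for example
    from a model combining file quality and collector performance drivers.
    """
    if len(collectors) != len(pools):
        raise ValueError("expected one pool per collector")
    best_total, best_plan = float("-inf"), None
    for ordering in permutations(pools):
        plan = dict(zip(collectors, ordering))
        total = sum(expected_collections[(c, p)] for c, p in plan.items())
        if total > best_total:
            best_total, best_plan = total, plan
    return best_plan, best_total


# Invented estimates: strong collectors clear easy files fast enough that
# their extra throughput outweighs their edge on hard files.
estimates = {
    ("strong", "hard"): 100, ("strong", "easy"): 180,
    ("average", "hard"): 60, ("average", "easy"): 110,
}
plan, total = best_allocation(["strong", "average"], ["hard", "easy"], estimates)
print(plan, total)  # the conventional plan (strong -> hard) would total 210
```

Exhaustive search grows factorially with the number of collectors, so it only suits toy cases; for realistic sizes, a polynomial-time solver such as SciPy's `scipy.optimize.linear_sum_assignment` (with `maximize=True`) addresses the same problem.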

As a result of the redesigned collections process and the optimization of each step, the bank increased the funds it collected by more than 40%, resulting in savings of hundreds of millions of dollars in bad debts that it would otherwise have written off.

Boosting IT Performance

Big-data IT technologies can both improve the capabilities and reduce the costs of bank IT systems. Linear scalability, in which banks buy only the hardware or software capability that they actually need; the use of inexpensive commodity-hardware components, especially for tasks that are computationally intensive; and the ease of manipulation of multistructured or unstructured data are all big steps forward for most financial institutions.

Banks can leverage these characteristics in several ways. These include efficiently processing the vast amounts of data generated by the omnichannel customer journeys common today; implementing more sophisticated, data-intensive models; and doing a better job of balancing the workloads of data warehouses that often operate close to saturation levels, thereby avoiding expensive upgrades.

A large European bank, for example, recently faced a conundrum with respect to its plans for a new data warehouse and CRM systems: the functionalities requested by the bank’s business units far exceeded the budgeted capacity of the new system, which was a traditional, though state-of-the-art, data warehouse. A review of the bank’s data storage and manipulation needs sparked the insight that led to a different, and much less costly, solution. The bank identified a series of applications using unstructured or multistructured data from various digital channels. Traditional systems are not well suited to processing this type of data and consume excessive computing and storage resources when they do. A new, hybrid data-warehouse architecture, combining traditional and big-data technologies and running on clusters of Hadoop commodity servers, accommodated all the functionalities needed by the business units and produced savings of almost 30% of the initial budget.

Creating New Revenue Streams

Companies in multiple industries are generating entirely new revenue streams, business units, and stand-alone businesses as a result of the information provided by the data they hold. (See “Seven Ways to Profit from Big Data as a Business,” BCG article, March 2014.) Banks are no exception; indeed, their vast volumes of data give them opportunities for customer insights that other businesses can only imagine. The challenge for banks is in using and manipulating data in ways that respect customer trust and privacy. The EU has promulgated especially stringent regulations in this regard. (See Earning Consumer Trust in Big Data: A European Perspective, BCG report, March 2015.)

Despite these constraints, several retail banks have found ways to monetize insights (as opposed to data) generated through their core activities by making customer data anonymous and aggregating or packaging it in ways that are valuable to other companies.

In one example, a leading European retail bank used data from its payment-card unit to build a digital dashboard for restaurants and bars. The dashboard displays high-level, aggregated information about each establishment, including the age and revenue brackets of customers, the behavioral segments to which customers belong, and whether they are first or repeat customers—information that restaurants could use to better serve, and sell to, their patrons. Restaurants were quick to recognize the dashboard’s value: it achieved penetration of more than 50% of the bank’s restaurant clients in just a few months. The bank projects new revenues of €50 million with a profit margin of about 40%—and that’s after paying for the bank’s new big-data system. The bank has since launched several similar initiatives.
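The article does not say how the bank built its dashboard. As a minimal sketch, assuming hypothetical card-transaction records of the form `(merchant_id, customer_id, customer_age)`, the per-establishment view could be computed by counting distinct customers per age bracket and the share who visit more than once. The suppression of small groups below a `min_group_size` threshold is an added assumption, a common disclosure-control safeguard rather than something the article describes.

```python
from collections import Counter, defaultdict

# Hypothetical brackets; revenue brackets or behavioral segments could be added the same way.
AGE_BRACKETS = [(18, 29, "18-29"), (30, 44, "30-44"), (45, 64, "45-64"), (65, 200, "65+")]


def age_bracket(age):
    for low, high, label in AGE_BRACKETS:
        if low <= age <= high:
            return label
    return "unknown"


def merchant_dashboard(transactions, min_group_size=10):
    """Aggregate (merchant_id, customer_id, customer_age) records per merchant."""
    visits = defaultdict(Counter)  # merchant -> {customer_id: number of visits}
    ages = {}
    for merchant_id, customer_id, customer_age in transactions:
        visits[merchant_id][customer_id] += 1
        ages[customer_id] = customer_age

    dashboard = {}
    for merchant_id, per_customer in visits.items():
        if len(per_customer) < min_group_size:
            continue  # too few customers to report anything without identifying them
        brackets = Counter(age_bracket(ages[c]) for c in per_customer)
        repeat_customers = sum(1 for n in per_customer.values() if n > 1)
        dashboard[merchant_id] = {
            "customers": len(per_customer),
            "repeat_share": repeat_customers / len(per_customer),
            # Only brackets with enough distinct customers are shown.
            "age_brackets": {b: n for b, n in brackets.items() if n >= min_group_size},
        }
    return dashboard


# Made-up records for illustration.
records = [("m1", "c1", 25), ("m1", "c1", 25), ("m1", "c2", 27), ("m1", "c3", 50), ("m2", "c4", 70)]
print(merchant_dashboard(records, min_group_size=2))
```

Only aggregates leave the function: individual customer IDs are used to count visits but never appear in the output, which is in the spirit of monetizing insights rather than raw data.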

Getting the Most from Big Data

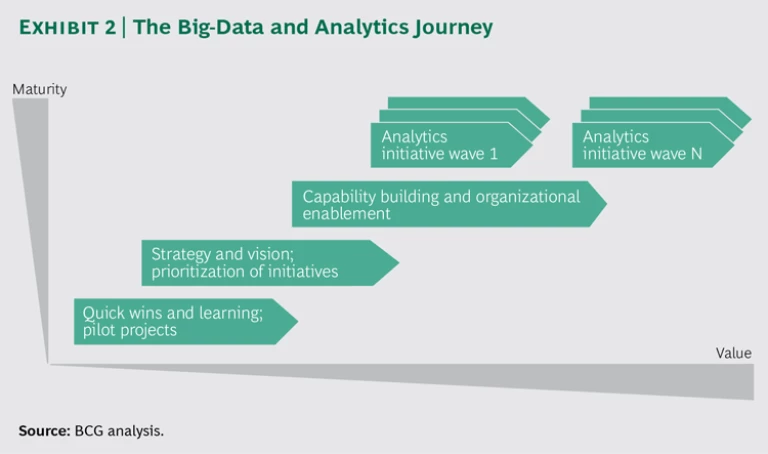

Data and analytics are powerful tools, but they are also complex, requiring technology, technical expertise, organizational and resourcing support, and, quite often, a test-and-learn approach to capitalize on their potential. It takes time to build, staff, test, adjust, and perfect big-data programs so that they function at full potential. For banks, as for other companies, big data is a journey. (See Exhibit 2.)

Most banks have already run pilot or proof-of-concept projects, and rightly so. This is the best way to validate the potential, identify issues, and get the first quick wins from big data. Speed and agility are crucial in creating big-data applications. Short cycles, iterative development, and frequent pilots should be the rule. Risk taking should be encouraged and mistakes accepted. Big data is often uncharted ground, and even disappointment, or at least carefully analyzed disappointment, can be a good teacher.

Because companies can evolve and mature even after an imperfect start, most banks will be able to put themselves on the road to high-impact big-data success. We have created a basic roadmap to follow.

Assess your current situation. Most banks are already using big data, sometimes without even knowing it. Every retail bank has teams that use data and relatively advanced analytical techniques in everyday tasks such as risk assessment, pricing, and campaign management. And most banks have started experimenting with the new big-data technologies. More often than not, however, these efforts are carried out in a piecemeal and uncoordinated fashion. Even more often, data governance is administered on an ad hoc basis and driven by purely technical, rather than business, considerations. In many cases, banks also fail to integrate new analytical opportunities, roles, and responsibilities into their organizations to make them more data-driven and customer-centric.

It is paramount for a bank to run a thorough diagnostic of its current data and analytics situation to identify the areas and capabilities in which it is close to achieving its desired state (or to aligning with the current state of the market) and those to which it needs to devote attention.

Develop a big-data vision. In our experience, the next step is the one that causes many banks to falter: moving beyond the diagnostic stage and building a vision of the role that data will play in the value chain, which includes identifying and prioritizing future applications and opportunities and evaluating the capabilities that the bank needs in order to successfully implement its plan. Too often, banks take a narrow view of the opportunities and capabilities necessary to succeed. The most innovative—and potentially most lucrative—opportunities usually are not readily apparent. That vision also shapes the role and place of big data in the organization and helps determine budgets, staffing, and organization structure. Strong sponsorship at senior levels sends a signal to the rest of the bank that top management attaches high importance to data and analytics.

Banks need to create an environment in which novel applications—ideas that truly differentiate a company from its competitors—can be quickly identified and developed. The exploration of new data applications should be encouraged at all levels of the organization, with employees given time and resources to pursue their ideas.

Bring the organization along. Ensuring widespread success means overcoming organizational inertia and skepticism. It’s hard to overstate the importance of this step. The wide range of expertise needed to identify and develop applications will require the skills of many individuals across the company. It’s vital, therefore, to create strong links among professionals who may well have very different backgrounds and very little experience in working with one another. Frequent dialogue and ongoing collaboration will help these interdisciplinary teams zero in on, and prioritize, the most relevant business problems and opportunities. Formal processes can spur this kind of collaboration, as can a more informal push from the top. Establishing a clear roadmap for success that focuses not only on building capabilities but also on continually demonstrating the value of big data is essential to achieving buy-in and building momentum.

Cultivate the critical capabilities. Similarly, banks need to recognize that the requisite big-data capabilities are not limited to high-price, state-of-the-art hardware and software plus a team of data scientists. All too often, the inability to recognize the breadth of the capabilities required hinders the organization’s data enablement and restricts the impact of big data to a few very specific, and often limited-impact, areas. Banks end up building small pockets of excellence but fail to instill in their organizations an appreciation of the power that big data can bring.

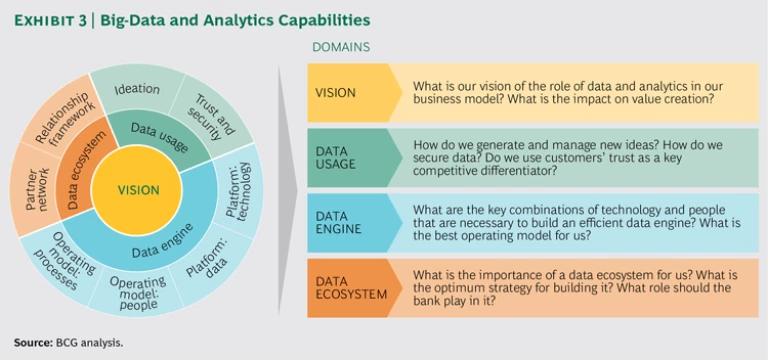

Big-data capabilities fall into three domains:

- Data Usage. How does the bank generate and manage new ideas? How does it secure data? Does it use customer trust as a key competitive differentiator?

- Data Engine. What are the key combinations of technology and people necessary to build an efficient data engine? What is the best operating model for each particular bank?

- Data Ecosystem. Who are the partners, and what are the relationships that a bank needs? Which roles are internal, and which are external? What is the optimum strategy for building the ecosystem? What is the bank’s own role in it?

Banks need to address all three domains as they move from vision to execution. (See Exhibit 3. See also Enabling Big Data: Building the Capabilities That Really Matter, BCG Focus, May 2014.) These capabilities need to be built by completing specific, discrete projects with measurable business cases and clear milestones. Large foundational programs that take years to deliver business value—if they ever do—should be avoided.

Working on data and analytics requires compiling the right mix of skills early on, with dedicated resources working in multidisciplinary teams that combine businesspeople, data scientists, and IT experts. The teams should be tightly linked units that are core to the business. Last but not least, banks need to understand that operating pace is key: it is not so much what you do, but how fast you do it. The focus of banks and their big-data teams needs to be on the speed to market from idea generation to final implementation. Building the ideal organization structure is less important than working cross-functionally and integrating data and analytics into day-to-day business processes, with the goal of rapidly generating tangible value.

For retail banks, big data is already big business. But for many, it can be much bigger still, as the volume and depth of the available data grow, analytical models improve, and the sophistication of banking executives and data scientists increases with experience and success. There is no bigger playing field for big data than banking. Banks that raise their game first will not only reap immediate financial rewards but will also establish data and analytics capabilities that will be hard for competitors to overcome.

Acknowledgments

The authors would like to thank Astrid Blumstengel, Julia Booth, Ravi Chabaldas, Nicolas Harlé, and Claire Tracey for their contributions to this report. They would also like to thank David Duffy for his help in writing the report and Katherine Andrews, Gary Callahan, Lilith Fondulas, Elyse Friedman, Kim Friedman, Abby Garland, and Sara Strassenreiter for their contributions to the report’s editing, design, and production.