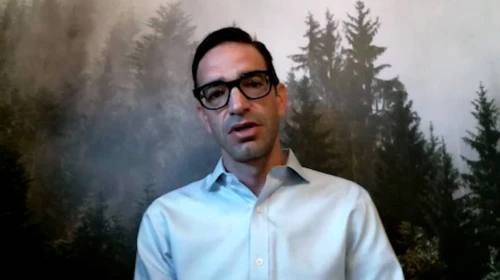

Steven Mills

Managing Director & Partner, Chief AI Ethics Officer, Global Leader, BCG Center for Digital Government

Washington, DC

Education

- MSc, operations research and forest management planning, Pennsylvania State University

- BSc, wildlife and fisheries management, Frostburg State University

Honors and Awards

- Top 100 Most Influential People in Data, DataIQ, 2022

- Outstanding Achievement in the Field of AI Ethics nominee, CogX Awards, 2021

- Named one of Forbes 15 AI Ethics Leaders Shaping the Future, 2021

Steven Mills is the Global Chief AI Ethics Officer at Boston Consulting Group. He also serves as the global leader for BCG’s Center for Digital Government, where he guides the firm’s digital work within the Public Sector practice.

Since joining BCG, Steve has supported a wide range of private and public sector clients in health, finance, aerospace, social impact, technology, and defense. His technical leadership includes AI product development, implementing complex GenAI and machine learning use cases, and providing decision support through large-scale modeling and simulation.

Steve’s leadership brings commercial best practices into government, including designing AI and analytic strategies, AI workforce development, and planning and executing AI training programs. He is a recognized expert in responsible AI, helping a variety of public and private sector clients develop responsible AI strategies, implementation plans, AI/GenAI and agentic AI test and evaluation capabilities, and tools and training. He is responsible for developing and overseeing BCG’s own responsible AI program, which has been recognized by the Financial Times (2023) and the Responsible AI Institute (2022).

Steve is an invited member of the World Economic Forum’s Global AI Governance Alliance, a group composed of ministers and heads of regulatory agencies, chief executives, and leading technical and civil society experts who provide strategic guidance and shape the direction of the World Economic Forum’s Centre for the Fourth Industrial Revolution. His expertise is regularly sought by media outlets including WSJ, Fortune, Forbes, Bloomberg, and CNN.

Prior to joining BCG, Steve spent eight years at Booz Allen Hamilton, where he served as the Director of Machine Intelligence and the Director of Booz Allen Futures, a business unit focused on exploring the atypical intersections between emerging technology and socioeconomic, environmental, and geopolitical trends. Before that, he worked as a forest planning/operations research analyst at FORSight Resources.